Quadrilateral Interpolation, Part 2

It’s been quite a while since the first entry in this series! I apologize for the long delay—at the time, I’d intended to write at least one more entry, but I couldn’t get the math to work and lost interest. However, I recently had occasion to revisit this topic, and this time was able to make progress.

In this article, I’ll cover bilinear interpolation on quadrilaterals. Unlike the projective interpolation covered in Part 1, this method will allow us to maintain regular UV spacing along all four of the quad’s edges, regardless of its shape; but we’ll see that to achieve this, we’ll have to accept a different kind of distortion to the texture.

The Story So Far

The central problem of this series is: how can we map a rectangular texture image onto an arbitrary convex quadrilateral?

If we model the quad as two triangles, and apply ordinary (linear) texture mapping to the mesh, we get something like this:

There’s a visible seam at the edge between the two triangles, where the derivatives of the mapping change abruptly. We could improve the situation by subdividing the quad more finely, and assigning appropriate UVs to the interior vertices; but perhaps we can’t or don’t wish to do that. Instead, we’re looking at alternative methods for interpolating the UVs to avoid this problem altogether.

In part 1, I looked at projective interpolation, which produces results like this:

This method, based on perspective projection, succeeds in removing the visible seam between triangles in a quad. Unfortunately, it has a couple of other issues. First, the perspective-like transformation tends to produce an unwanted “3D” effect, where a 2D quad that’s flat on the screen comes to look like a 3D rectangle stretching off into the distance. Second, the UV spacing becomes nonuniform along the edges of the quad—which introduces a $C^0$ seam between adjacent quads, even worse than the original $C^1$ seams we were trying to fix!

Bilinear Interpolation

If you’re reading this, you’re probably familiar with bilinear interpolation in the context of texture sampling. At a point between texel centers, the sampling result is a blend of all four of the nearest texels.

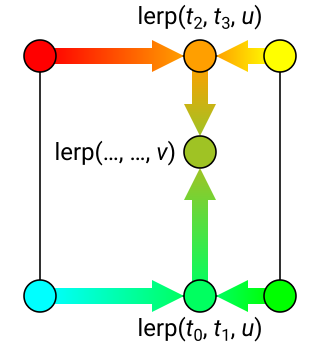

This can be expressed mathematically as follows. If $t_0 \ldots t_3$ are the four texel colors, the interpolated result is: $$\begin{aligned} t(u, v) &= \text{lerp}\bigl(\text{lerp}(t_0, t_1, u), \text{lerp}(t_2, t_3, u), v\bigr) \\ &= (1-u)(1-v) t_0 + u(1-v) t_1 + (1-u)v t_2 + uv t_3 \end{aligned}$$

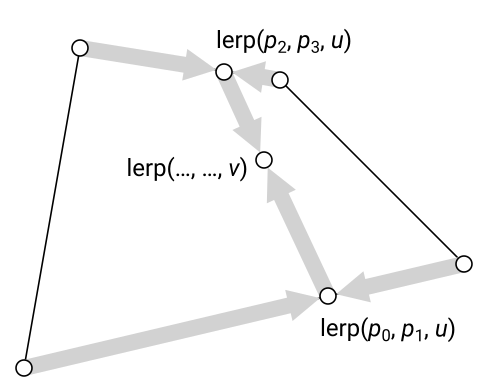

Let’s now define bilinear interpolation for a quadrilateral exactly the same way, except that instead of four texel colors, we’ll have the four vertices of the quad. If the vertex positions are $p_0 \ldots p_3$, then the position corresponding to a given UV on the quad is $$ p(u, v) = \text{lerp}\bigl(\text{lerp}(p_0, p_1, u), \text{lerp}(p_2, p_3, u), v\bigr) $$

This defines the forward UV mapping—from UV to position on the quad’s surface. To actually implement this technique, we’re going to need to invert this equation, so we can write a pixel shader that maps the pixel’s position back to the UV at which to sample the texture. I’ll show how to do that a bit later; but first, let’s have a look at the results.

As hoped, bilinear interpolation both hides the join between the two triangles in each quad, and keeps uniform spacing along the edges so that the texture will match between adjacent quads. (There’s still a $C^1$ seam between the quads, where the mapping derivatives jump; but that’s unavoidable as long as we insist that the texture completely fill the quad.)

Properties

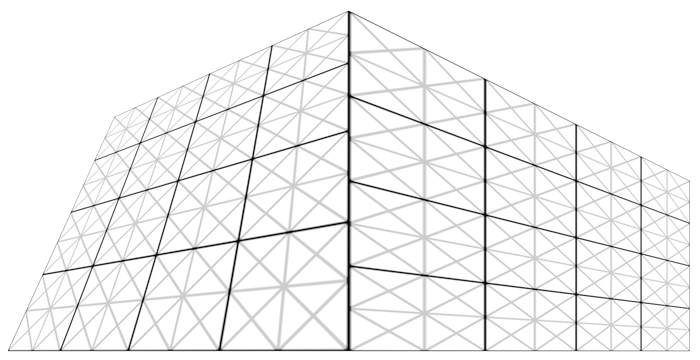

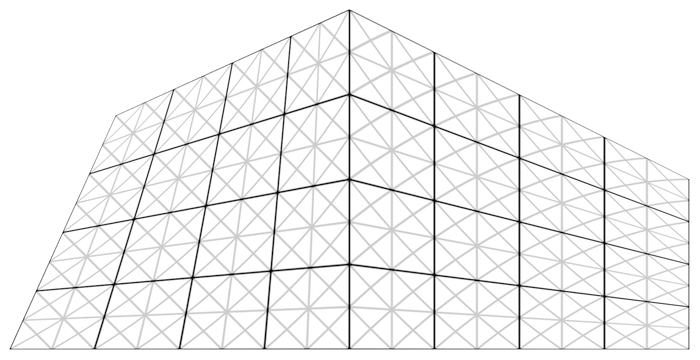

Despite the downsides of projective interpolation, it does have one nice feature: it preserves straight lines. Any line in the original texture space will be mapped to another line by an arbitrary projective transform. In the transformed grid below, note how all the horizontal, vertical, and diagonal lines are still straight:

In bilinear interpolation, this is no longer the case—some lines in the original texture space now come out curved:

The curvature introduced by the mapping is orientation-dependent. All the horizontal and vertical lines from the original grid are still straight; this is because the bilinear interpolation formula reduces to linear interpolation when either $u$ or $v$ is held fixed. However, most diagonal lines will be mapped to curves. In particular, they’ll be mapped to quadratic splines, since the bilinear interpolation formula becomes quadratic (in the general case) when $u$ and $v$ are held proportional to each other.

Depending on the texture, this effect may not be very noticeable. In textures that don’t have a lot of line-like features to begin with, or whose line-like features are mostly vertical and horizontal (e.g. bricks), the distortion of diagonals is pretty hard to see.

By the way, the fact that bilinear interpolation creates quadratic splines along diagonals can be exploited to evaluate splines in a GPU texture unit.

Inversion

(If you prefer not to wade through the mathy details and just want to see the code, feel free to jump down to the Implementation section. In my derivation here, I’m indebted to this article by Íñigo Quílez.)

As seen above, the bilinear interpolation setup gives us an expression for the position of a point in terms of its $u, v$ coordinates within the quad. Let $p_0 \ldots p_3$ be the quad’s vertices (in whatever space the quad is defined—model space, world space, screen space). Then the position corresponding to a given $u, v$ is: $$\begin{aligned} p(u, v) &= \text{lerp}\bigl(\text{lerp}(p_0, p_1, u), \text{lerp}(p_2, p_3, u), v\bigr) \\ &= (1-u)(1-v) p_0 + u(1-v) p_1 + (1-u)v p_2 + uv p_3 \end{aligned}$$ However, we’ll need to invert this equation in order to apply it in a pixel shader: we need to calculate the UV at which to sample the texture (we can’t pass it down from the vertex shader because that would give us linear interpolation, not bilinear). So we need to solve for $u, v$ in terms of $p$, the pixel’s position within the quad.

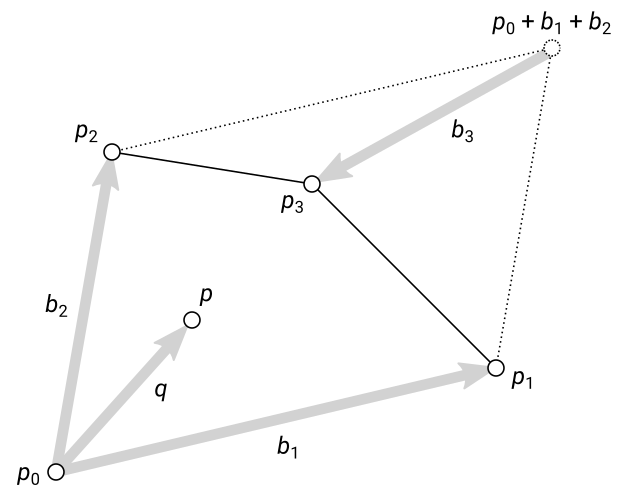

First, let’s multiply out and regroup terms: $$ 0 = (p_0 - p) + (p_1 - p_0) u + (p_2 - p_0) v + (p_0 - p_1 - p_2 + p_3) uv $$ The four vectors in parentheses here can readily be interpreted geometrically. We have the pixel’s position relative to the origin of UV space; the two UV basis vectors; and one more, $p_0 - p_1 - p_2 + p_3$. This vector expresses how the quad deviates from being a parallelogram. It is the vector difference between the quad’s final point $p_3$ and where that point would be to complete the parallelogram spanned by the UV basis vectors:

For convenience, let’s give names to these vectors: $$\begin{aligned} q &\equiv p - p_0 \\ b_1 &\equiv p_1 - p_0 \\ b_2 &\equiv p_2 - p_0 \\ b_3 &\equiv p_0 - p_1 - p_2 + p_3 \end{aligned}$$ Then the equation we’re solving becomes: $$ 0 = -q + b_1 u + b_2 v + b_3 uv \qquad (*) $$ Now let’s solve for $u$ in terms of $v$: $$\begin{aligned} q - b_2 v &= (b_1 + b_3 v) u \\ u &= \frac{q - b_2 v}{b_1 + b_3 v} \end{aligned}$$ But wait! These quantities are vectors! Didn’t your mother ever teach you that you cannot divide vectors? Don’t worry, folks; I know geometric algebra. 😄

In seriousness: when we’ve correctly solved for $v$, then the numerator and denominator of this fraction must be parallel, so we can divide them to recover $u$ (and it will be a scalar). To implement this in practice, we’ll just pick one of the vector’s coordinate components to do the calculation with.

Onward to solving for $v$. We can eliminate $u$ from equation $(*)$ by wedging both sides with $b_1 + b_3 v$. (In case you’re not familiar with the wedge product, it’s a tool from Grassmann algebra, part of geometric algebra. For our purposes here, you can treat it as the signed area of the parallelogram spanned by two vectors. When you wedge a vector with itself, you get zero.) $$\begin{aligned} 0 &= (-q + b_1 u + b_2 v + b_3 uv) \wedge (b_1 + b_3 v) \\ &= (b_1 \wedge q) + (b_3 \wedge q - b_1 \wedge b_2) v + (b_2 \wedge b_3) v^2 \end{aligned}$$ We now have a quadratic equation in $v$ and we can apply the usual quadratic formula. (Wait! These quantities are bivectors! One cannot simply apply the quadratic formula to bivectors! It’s okay, since these bivectors all lie in the plane of the quad and are therefore proportional to one other. Again, in practice we’ll just look at one coordinate component of the bivectors.)

The two possible solutions to $v$ are: $$ v = \frac{-B \pm \sqrt{B^2 - 4AC}}{2A}, \qquad \begin{aligned} A &\equiv b_2 \wedge b_3 \\ B &\equiv b_3 \wedge q - b_1 \wedge b_2 \\ C &\equiv b_1 \wedge q \end{aligned} $$ In practice, the discriminant is always positive inside the quad; also, only one of the two roots is needed—which one depends on the winding of the quad (and on the coordinate system conventions). Once you have the correct $v$, plug it into the formula for $u$ and you’re done.

Implementation

Translating all this into shader code is fairly straightforward. We set up the $q, b_1, b_2, b_3$ vectors in the vertex shader, then solve for $u, v$ in the pixel shader.

One complication is that these vectors need to be calculated per quad, so you can’t have vertices shared between quads. If you’re applying this to a mesh, it will need to be “unwelded” so that each quad has distinct vertices. (You can still share vertices between the two triangles in each quad.)

Each vertex shader invocation also needs to know all four vertices of the quad it belongs to. To avoid duplicating all the vertex positions many times in memory, we can use instancing: one instance per quad, with the per-quad parameters stored in the instance vertex buffer (very similar to rendering billboard particles).

Here’s what the shader might look like in pseudo-HLSL, for a 2D case where the quad is always in the $xy$ plane:

struct InstData

{

float2 p[4]; // Quad vertices

};

struct V2P

{

float4 pos : SV_Position;

float2 q, b1, b2, b3;

};

void Vs(

in uint iVtx : SV_VertexID,

in InstData inst,

out V2P o)

{

o.pos = inst.p[iVtx];

// Set up inverse bilinear interpolation

o.q = o.pos - inst.p[0];

o.b1 = inst.p[1] - inst.p[0];

o.b2 = inst.p[2] - inst.p[0];

o.b3 = inst.p[0] - inst.p[1] - inst.p[2] + inst.p[3];

}

float Wedge2D(float2 v, float2 w)

{

return v.x*w.y - v.y*w.x;

}

void Ps(

in V2P i,

out float4 color : SV_Target)

{

// Set up quadratic formula

float A = Wedge2D(i.b2, i.b3);

float B = Wedge2D(i.b3, i.q) - Wedge2D(i.b1, i.b2);

float C = Wedge2D(i.b1, i.q);

// Solve for v

float2 uv;

if (abs(A) < 0.001)

{

// Linear form

uv.y = -C/B;

}

else

{

// Quadratic form. Take positive root for CCW winding with V-up

float discrim = B*B - 4*A*C;

uv.y = 0.5 * (-B + sqrt(discrim)) / A;

}

// Solve for u, using largest-magnitude component

float2 denom = i.b1 + uv.y * i.b3;

if (abs(denom.x) > abs(denom.y))

uv.x = (i.q.x - i.b2.x * uv.y) / denom.x;

else

uv.x = (i.q.y - i.b2.y * uv.y) / denom.y;

color = tex.Sample(samp, uv);

}

Conclusion

Bilinear interpolation solves the problem of mapping a rectangular texture to an arbitrary quad, with a different set of trade-offs from the projective mapping we saw previously. On the plus side, bilinear interpolation doesn’t produce as much of a faux-3D effect, and it always maintains uniform UV spacing along the quad’s edges. On the other hand, it introduces curved diagonals, and it also has a more complicated (and more expensive) pixel shader than projective interpolation.

We’ve eliminated the seam between the two triangles in a quad, but one lingering issue is the seam between adjacent quads in a mesh. It would be nice if we could have some control over the tangents of the UV mapping along the edge, so we could force them to match across that join. But that’s a topic for another day!