Reading Veach’s Thesis

If you’ve studied path tracing or physically-based rendering in the last twenty years, you’ve probably heard of Eric Veach. His Ph.D. thesis, published in 1997, has been hugely influential in Monte Carlo rendering. Veach introduced key techniques like multiple importance sampling and bidirectional path tracing, and clarified a lot of the mathematical theory behind Monte Carlo rendering. These ideas not only inspired a great deal of later research, but are still used in production renderers today.

Recently, I decided to sit down and read this classic thesis in full. Although I’ve seen expositions of the central ideas in other places such as PBR, I’d never gone back to the original source. The thesis is available from Stanford’s site (scroll down to the very bottom for PDF links). It’s over 400 pages (a textbook in its own right), but I’ve found it very readable, with clearly presented ideas and incisive analysis. There’s a lot of formal math, too, but you don’t really need more than linear algebra, calculus, and some probability theory to understand it. I’m only about halfway through, but there have already been some really interesting bits that I’d like to share. So hop in, and let’s read Veach’s thesis together!

This isn’t going to be a comprehensive review of everything in the thesis—it’s just a selection of things that made me go “oh, that’s cool”, or “huh! I didn’t know that”.

Unbiased vs Consistent Algorithms

You’ve probably heard people talk about “bias” in rendering algorithms and how unbiased algorithms are better. Sounds reasonable: bias is bad and wrong, right? But then there’s this other thing called “consistent” that algorithms can be, which makes them kind of okay even if they’re biased? I’ve encountered these concepts in the graphics world but had never really seen a clear explanation of them (especially “consistent”).

Veach has a pretty nice one-page explanation of what this is and why it matters (§1.4.4). Briefly, bias is when the mean value of the estimator is wrong, independent of the noise due to random sampling. “Consistent” is when the estimate converges to the correct answer as you take more samples, which means any bias must approach zero. An unbiased algorithm produces estimates that are randomly spread around the true, correct answer from the very beginning. A consistent algorithm produces estimates that are randomly spread around a wrong answer to begin with, but then migrate toward the right answer as the sample count grows.

The reason it matters is that with an unbiased algorithm, you can track the variance in your samples and get a good idea of how much error there is, and you can accurately predict how many samples it’s going to take to get the error down to a given level. With a biased but consistent algorithm, you could have a situation where it looks like it’s converged because the samples have low variance, but it’s converged to an inaccurate value. You have no real way to detect that, and no way to tell how many more samples might be necessary to achieve a given error bound.
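To make the distinction concrete, here’s a toy sketch in Python (my own illustration, not something from the thesis). It estimates the integral of $3x^2$ over $[0, 1]$, which is exactly 1, in two ways: a plain Monte Carlo average, and a deliberately broken version that divides by $n + 1$ instead of $n$. The function names and the choice of integrand are just assumptions for the demo.

```python
import random

def f(x):
    # Integrand whose integral over [0, 1] is exactly 1.
    return 3.0 * x * x

def unbiased_estimate(n, rng):
    # Plain Monte Carlo average: its expected value is 1 for every n.
    return sum(f(rng.random()) for _ in range(n)) / n

def consistent_estimate(n, rng):
    # Deliberately biased: dividing by n + 1 gives an expected value of
    # n / (n + 1), which is wrong for any finite n but tends to 1.
    return sum(f(rng.random()) for _ in range(n)) / (n + 1)

def average_over_runs(estimator, n, runs, seed=0):
    # Average many independent runs to expose the estimator's mean.
    rng = random.Random(seed)
    return sum(estimator(n, rng) for _ in range(runs)) / runs

if __name__ == "__main__":
    for n in (4, 64, 1024):
        u = average_over_runs(unbiased_estimate, n, 500)
        c = average_over_runs(consistent_estimate, n, 500)
        print(f"n={n:5d}  unbiased mean={u:.4f}  consistent mean={c:.4f}")
```

At small `n` the second estimator’s mean sits visibly below 1 no matter how many runs you average, and the gap only closes as `n` itself grows. Real biased renderers are far less transparent about their bias than this, which is exactly the problem described above.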

Photon Phase Space

The classic Kajiya rendering equation deals with this quantity called “radiance” that’s notoriously hard to get a handle on, both intuitively and mathematically. We’re usually shown a definition that has some derivative-looking notation like $$ L = \frac{\mathrm{d}^2\Phi}{\mathrm{d}A \, \mathrm{d}\omega \cos \theta} $$ which, like, what? What is the actual function that is being differentiated here? What are the variables? What does this even mean?

If you’re the sort of person who feels more secure when things like this are put on an explicit, formal mathematical footing, Chapter 3 is for you. Veach takes it back to physics by defining a phase space (state space) for photons. Each photon has a position, direction, and wavelength, so the phase space is 6-dimensional (3 + 2 + 1). We can imagine the photons in the scene as a cloud of points in this space, moving around with time, spawning at light sources and occasionally dying when absorbed at surfaces.

Then, all the usual radiometric quantities like flux, irradiance, radiance, and so on can be defined in terms of measuring the density of photons (or rather, their energy density) in various subsets of this space. For example, radiance is defined in terms of the photons flowing through a given patch of surface, with directions within a given cone, and then taking a limit as the surface patch and cone sizes go to zero. This kind of limiting procedure is formalized using measure theory, as a Radon–Nikodym derivative.
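Here’s a rough numerical sketch of that idea in Python (my own toy setup, not Veach’s formalism). It assumes a unit square crossed by a uniform radiance field, generates “photons” that each carry an equal share of the total flux, and then recovers radiance by adding up the energy of the photons that land in a small patch with directions inside a small cone around the normal. The function name `estimate_radiance` and all parameter values are assumptions for illustration.

```python
import math
import random

def estimate_radiance(n_photons, true_radiance=1.0, patch_side=0.5,
                      cone_half_angle=0.2, seed=0):
    """Estimate radiance along the normal by counting photon energy."""
    rng = random.Random(seed)
    # A unit square with uniform radiance L over the hemisphere carries a
    # total flux of pi * L (integral of cos(theta) over the hemisphere).
    energy_per_photon = math.pi * true_radiance / n_photons
    cos_limit = math.cos(cone_half_angle)
    energy = 0.0
    for _ in range(n_photons):
        # Photon position: uniform on the unit square.
        x, y = rng.random(), rng.random()
        # Photon direction: cosine-weighted about the normal, which is how
        # crossings are distributed for a uniform radiance field. Only the
        # polar angle matters for a cone centered on the normal.
        cos_theta = math.sqrt(rng.random())
        if x < patch_side and y < patch_side and cos_theta >= cos_limit:
            energy += energy_per_photon
    patch_area = patch_side * patch_side
    cone_solid_angle = 2.0 * math.pi * (1.0 - cos_limit)
    # cos(theta) is taken as 1 at the cone's center; the small error this
    # introduces vanishes as the cone shrinks.
    return energy / (patch_area * cone_solid_angle)

if __name__ == "__main__":
    print(estimate_radiance(400_000))
```

The estimate should land close to the radiance that was put in, with some noise from the finite photon count and a slight underestimate from the finite cone. Shrinking the patch and cone (while adding photons to keep the noise down) is the discrete analogue of the limit in the definition.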

Incident and Exitant Radiance

Another thing we get from this notion of photon phase space is a precise distinction between incident and exitant radiance. The rendering equation describes how to calculate $L_o$ (exitant radiance, leaving the surface) in terms of the BSDF and $L_i$ (incident radiance, arriving at the surface). But then how are these $L_o$ and $L_i$ related to each other? There’s just one unified radiance field, not two; but trying to define it as a function of position and direction, $L(x, \omega)$, we run into some awkwardness at points on surfaces because the radiance changes discontinuously there.

Veach §3.5 gives a nice definition of incident and exitant radiance functions in terms of the photon phase space, by looking at trajectories moving toward the surface or away from it in time. (To be fair, I think this could be done as well by looking at one-sided limits of the 3D radiance field as you approach the surface from either direction.)

Reciprocity and Adjoint BSDFs

Much of the thesis in Chapters 4–7 is concerned with how to handle non-reciprocal BSDFs—or, as Veach calls them, non-symmetric. We’re often told that BSDFs “should” obey a reciprocity law, $f(\omega_i \to \omega_o) = f(\omega_o \to \omega_i)$, in order to be well-behaved. However, Veach points out that non-reciprocal BSDFs are commonplace and unavoidable in practice:

- Refraction is non-reciprocal (§5.2). Radiance changes by a factor of $\eta_o^2 / \eta_i^2$ when refracted (more about this in the next section); reverse the direction of light, and it inverts this factor.

- Shading normals are non-reciprocal (§5.3). Shading normals can be interpreted as a factor $|\omega_i \cdot n_s| / |\omega_i \cdot n_g|$ multiplied into the BSDF. Note that this expression involves only $\omega_i$ and not $\omega_o$, so if those directions are swapped, this value will in general be different.
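The shading-normal case is easy to check numerically. The following Python sketch (my own, with made-up normals and directions) multiplies a reciprocal Lambertian BSDF by the factor $|\omega_i \cdot n_s| / |\omega_i \cdot n_g|$ and evaluates it with the two directions swapped.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def normalize(v):
    length = math.sqrt(dot(v, v))
    return tuple(x / length for x in v)

def shading_normal_factor(w_i, n_s, n_g):
    # |w_i . n_s| / |w_i . n_g|: depends on w_i only, never on w_o.
    return abs(dot(w_i, n_s)) / abs(dot(w_i, n_g))

def lambert_with_shading_normal(w_i, w_o, n_s, n_g, albedo=0.8):
    # Reciprocal Lambertian term (albedo / pi) times the shading-normal factor.
    return (albedo / math.pi) * shading_normal_factor(w_i, n_s, n_g)

n_g = (0.0, 0.0, 1.0)               # geometric normal
n_s = normalize((0.3, 0.0, 1.0))    # perturbed shading normal (hypothetical)
w_a = normalize((1.0, 0.0, 1.0))
w_b = normalize((-1.0, 0.0, 1.0))

forward = lambert_with_shading_normal(w_a, w_b, n_s, n_g)
swapped = lambert_with_shading_normal(w_b, w_a, n_s, n_g)
print(forward, swapped)  # the two values differ
```

When `n_s` equals `n_g` the factor is 1 and the two values agree again, so the asymmetry comes entirely from the shading normal.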

Does this spell doom for physically-based rendering algorithms? Surprisingly, no. According to Veach, it just means we have to be careful about the order of arguments to our BSDFs, and not treat them as interchangeable. The rendering will still work as long as we’re consistent about which direction light is flowing. (It’s a bit like working with non-commutative algebra; you can still do most of the same things, you just need to take care to preserve the order of multiplications.)

For photon mapping or bidirectional path tracing, we might need two separate importance-sampling routines: one to sample $\omega_i$ given $\omega_o$ (when tracing from the camera) and one to sample $\omega_o$ given $\omega_i$ (when tracing from a light source).

Another way to think about it is that when light is emitted and scatters through the scene, it uses the regular BSDF, but when “importance” is emitted by cameras and scatters through the scene, it uses the adjoint BSDF, which is just the BSDF with its arguments swapped (§3.7.6). Then both directions of scattering give consistent results and can be intermixed in algorithms.
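In code, “the BSDF with its arguments swapped” can be as small as a wrapper. This is a minimal Python sketch of the idea, assuming a BSDF is just a function of two direction tuples; `adjoint`, `lambert`, and `asymmetric_toy` are hypothetical names of mine, not anything from the thesis or a real renderer.

```python
import math

def adjoint(bsdf):
    # The adjoint BSDF is the same function with its two directions swapped.
    return lambda w_i, w_o: bsdf(w_o, w_i)

def lambert(w_i, w_o):
    # Reciprocal BSDF: constant, so swapping the arguments changes nothing.
    return 0.8 / math.pi

def asymmetric_toy(w_i, w_o):
    # Hypothetical non-reciprocal BSDF: weighted by w_i only, loosely in the
    # spirit of the shading-normal factor.
    return (0.8 / math.pi) * abs(w_i[2])

a = (0.6, 0.0, 0.8)
b = (0.0, 0.0, 1.0)

print(lambert(a, b), adjoint(lambert)(a, b))                # identical
print(asymmetric_toy(a, b), adjoint(asymmetric_toy)(a, b))  # different
```

For a reciprocal BSDF the wrapper is a no-op, which is why renderers that only use reciprocal BSDFs never need to think about it. For anything else, light paths and importance paths have to call different functions.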

Non-Reciprocity of Refraction

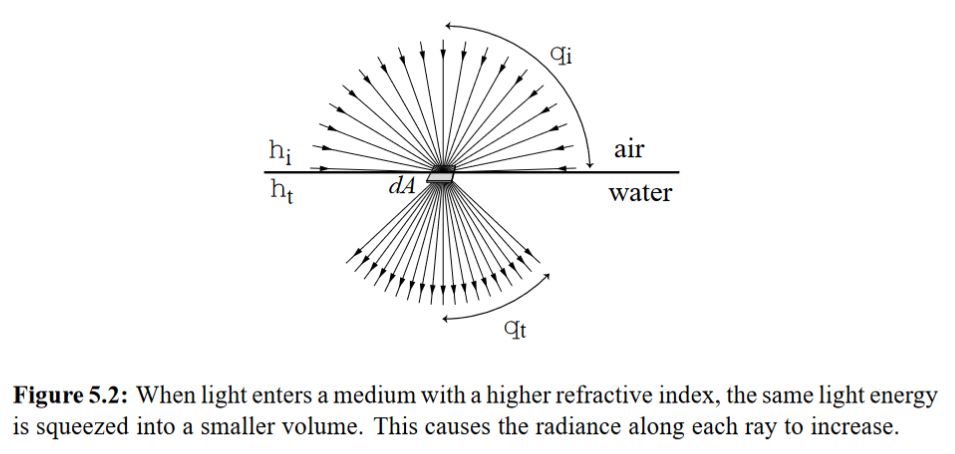

I was not previously aware that radiance should be scaled by $\eta_o^2 / \eta_i^2$ when a ray is refracted! This fact somehow skipped me by in everything I’ve read about physically-based light transport (although when I looked, I found PBR discussing this issue in §16.1.3). The radiance changes because light gets compressed into a smaller range of directions when refracted, as this diagram (excerpted from the thesis) shows:

So, a ray entering a glass object should have its radiance more than doubled. However, the scaling is undone when the ray exits the glass again. That explains why you can often get away without modeling this radiance scaling in a renderer; if the camera and all light sources are outside of any refractive media, there’s no visible effect. This would only show up if, for instance, some light sources were inside a medium, and even then it would only show up as those light sources being a little dimmer than they should be, which would be easy to overlook (and easy for an artist to compensate for by bringing those lights up a bit).
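The arithmetic is simple enough to sketch in a few lines of Python. I’m assuming here that $\eta_i$ is the index of the medium the light arrives from and $\eta_o$ the index of the medium it refracts into, and I’m ignoring Fresnel reflection losses entirely; `refract_radiance` is a hypothetical helper of mine.

```python
def refract_radiance(radiance, eta_i, eta_o):
    # eta_i: index of the medium the light arrives from (assumed convention).
    # eta_o: index of the medium the light refracts into.
    return radiance * (eta_o * eta_o) / (eta_i * eta_i)

AIR, GLASS = 1.0, 1.5

inside = refract_radiance(1.0, AIR, GLASS)       # 2.25: more than doubled
outside = refract_radiance(inside, GLASS, AIR)   # 1.0: the scaling is undone

print(inside, outside)
```

With an index of 1.5 for glass, the factor on the way in is $1.5^2 = 2.25$, and the factor on the way out is its reciprocal.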

However, the radiance scaling does become important when we use things like photon mapping and bidirectional path tracing, where we have to use the adjoint BSDF when tracing from the light sources. Then, the $\eta^2$ factors apply inversely to these paths, which is important to get right, or else the bidirectional methods won’t be consistent with unidirectional ones.

Veach also derives (§6.2) a generalized reciprocity relationship that holds for BSDFs with refraction (in the absence of shading normals): $$ \frac{f(\omega_i \to \omega_o)}{\eta_o^2} = \frac{f(\omega_o \to \omega_i)}{\eta_i^2} $$ He proposes that instead of tracking radiance $L$ along paths, we track the quantity $L/\eta^2$. When BSDFs are written with respect to this modified radiance, the $\eta^2$ factors cancel out and the BSDF becomes symmetric again. In this case, no scaling needs to be done as the ray traverses different media, and paths in both directions can operate by the same rules; only at the ends of the path (at the camera and at lights) do some $\eta^2$ factors need to be incorporated. Veach argues that this is a simpler and easier-to-implement approach to path tracing overall.
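To see why $L/\eta^2$ is the convenient quantity, here’s a small Python sketch (mine, and heavily simplified: no Fresnel losses, no absorption, just the refraction scaling) that follows a ray through a sequence of media and records both $L$ and $L/\eta^2$ in each one. The function name and the list of indices are assumptions for the demo.

```python
def trace_through_media(radiance, etas):
    """Return (L, L / eta^2) in each medium along a path through `etas`."""
    history = []
    current = radiance
    for k, eta in enumerate(etas):
        if k > 0:
            # Crossing an interface scales radiance by eta_new^2 / eta_old^2.
            prev = etas[k - 1]
            current *= (eta * eta) / (prev * prev)
        history.append((current, current / (eta * eta)))
    return history

# Air -> glass -> water -> air (illustrative indices of refraction).
history = trace_through_media(1.0, [1.0, 1.5, 1.33, 1.0])
for L, scaled in history:
    print(f"L = {L:.4f}   L / eta^2 = {scaled:.4f}")
```

The first column jumps around at every interface, while the second stays put. That constant column is the quantity Veach suggests carrying along the path.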

It’s interesting to note, though, that PBRT doesn’t take Veach’s suggested approach here; it tracks unscaled radiance, and puts in the correct scaling factors due to refraction, for paths in both directions.

Conclusion

The refraction scaling business was the most surprising point for me in what I’ve read so far, but Veach’s argument for non-symmetric scattering being OK as long as you take care to handle it correctly was also very intriguing!

That brings us to the end of Chapter 7, which is about halfway through. The next chapters are about multiple importance sampling, bidirectional path tracing, and Metropolis sampling. I hope this was interesting, and maybe I’ll do a follow-up post when I’ve finished it!