Artist-Friendly HDR With Exposure Values

Unless you’ve been living under a rock, you know that HDR and physically-based shading are practically the defining features of “next-gen” rendering. A new set of industry standard practices has emerged: RGBA16f framebuffer, GGX BRDF, filmic tonemapping, and so on. The runtime rendering/shading end of these techniques has been discussed extensively, for example in John Hable’s talk Uncharted 2: HDR Lighting and the SIGGRAPH Physically-Based Shading courses. But what I don’t see talked about so often is the other end of things: how do you acquire and author the HDR assets to render in your spiffy new physically-based engine?

In this article, I’d like to offer some thoughts on the subject of making HDR rendering as artist-friendly as possible.

Exposure Values

When building an HDR authoring workflow, the first problem you have to solve is: how do you represent the enormous range of luminances numerically in a way that’s friendly for people to view and edit? The human eye has a dynamic range of something like a billion to one (though not in a single scene). However, when lighting artists go to set the brightness of a light, a neon sign, or the sky, it’s a bit unkind to ask them to specify a number between 1 and 1,000,000,000.

Fortunately, photographers have been dealing with this issue for decades and have come up with a solution: a base-2 logarithmic scale known as exposure value, or EV. This started out as a tool for helping photographers decide which f-stop and shutter speed to use for a shot, but it’s evolved into a unit of measurement for luminance—analogous to decibels for sound pressure level. Like decibels, EV uses a logarithmic scale, but with base 2 instead of base 10. One EV step corresponds to a factor of two in luminance, so +1 EV is twice as bright as 0 EV, +2 EV is four times as bright, and so on.

Because our senses work approximately logarithmically as well, EV has the benefit of being a much more perceptually uniform scale than raw luminance. The difference between +1 and +2 EV looks about the same as that between 0 and +1 EV to our eyes.

An EV of 0 corresponds to a luminance of 0.125 cd/m². Note that 0 EV is not zero light; it’s about the level of ambient light in a bedroom illuminated only by a flashlight. Negative EVs are also valid—and necessary to represent darker scenes.
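As a quick sanity check on the scale, here is a minimal Python sketch of the conversion between EV and luminance. It assumes only the 0 EV = 0.125 cd/m² anchor given above; the function names are my own, not from any library.

```python
import math

EV0_LUMINANCE = 0.125  # cd/m^2 at 0 EV, the anchor stated in the text


def ev_to_luminance(ev: float) -> float:
    """Luminance in cd/m^2 for a given EV; each +1 EV doubles it."""
    return EV0_LUMINANCE * 2.0 ** ev


def luminance_to_ev(luminance: float) -> float:
    """EV for a given positive luminance in cd/m^2."""
    return math.log2(luminance / EV0_LUMINANCE)


for ev in (-2, 0, 1, 3, 16):
    print(f"{ev:+d} EV = {ev_to_luminance(ev):g} cd/m^2")
```

Note that +3 EV lands on exactly 1 cd/m², which is a handy reference point when converting by hand.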

EVs are also what Photoshop uses when you work in 32-bit mode; its exposure sliders are measured in EV, although they’re not labeled as such. It’s convenient to have Photoshop’s sliders and the sliders in your tools and engine behave consistently with each other.

Fitting Into Half-Float

RGBA16F is a good choice for an HDR framebuffer these days. It does have a greater memory and bandwidth cost of 64 bits per pixel, but it’s rather nicer than having to deal with the limitations of RGBM, LogLUV, 10-10-10-2 or any of the other formats that have been devised for shoehorning HDR data into 32 bits.

Being able to store everything in linear color space, use hardware blending and filtering, etc. is great, but the half-float format does come with a caveat: it doesn’t have a huge range. The maximum representable half-float value is 65504, and the minimum positive normalized value (note that GPU hardware typically flushes denormals to zero) is about 6.1 × 10⁻⁵. The ratio between them is about 1.1 × 10⁹, which is right about the dynamic range of the human eye. So half-float barely has enough range to represent all the luminances we can see, and this range must be husbanded carefully.

The half-float normalized range natively extends from −14 to +16 EV. It turns out that +16 EV is about the luminance of a matte white object in noon sunlight, so this range isn’t quite convenient—there’s not enough room on the top end to represent really bright things we can see, like a specular highlight in noon sunlight, or the sun itself. Fortunately, there’s plenty of room at the low end: −14 EV is a ridiculously dark luminance, probably comparable to intergalactic space!
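The numbers above can be reproduced with a few lines of Python. This is only a sketch: it assumes an internal value of 1.0 corresponds to 0 EV (which is how the −14 to +16 figures come out), and it uses the standard library’s half-float packing just to confirm that both limits are representable.

```python
import math
import struct

HALF_MAX = 65504.0            # largest finite half-float
HALF_MIN_NORMAL = 2.0 ** -14  # smallest positive normalized half-float


def roundtrip_half(x: float) -> float:
    """Store x as an IEEE half-float and read it back."""
    return struct.unpack("<e", struct.pack("<e", x))[0]


# Both limits survive a trip through 16-bit storage unchanged.
assert roundtrip_half(HALF_MAX) == HALF_MAX
assert roundtrip_half(HALF_MIN_NORMAL) == HALF_MIN_NORMAL

low_ev = math.log2(HALF_MIN_NORMAL)  # bottom of the normalized range
high_ev = math.log2(HALF_MAX)        # top of the range, just under +16
ratio = HALF_MAX / HALF_MIN_NORMAL   # usable dynamic range

print(f"range: {low_ev:+.2f} to {high_ev:+.2f} EV, ratio {ratio:.3g}")
```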

To fix this, you can simply shift all luminances by some value, say −10 EV, when converting them to internal linear RGB values. This gives an effective range of −4 to +26 EV, which more neatly brackets the range of luminances you’ll actually want to depict, and allows enough headroom for super-bright things. (Looking directly at the sun at noon, you’d actually see a luminance of more like +33 EV—but I figure that +26 EV is good enough in practice.)

Whenever you display EV to the user you’d undo this shift, so that the engine’s notion of EV continues to match up with the photography definition. You’ll also probably want to remove it if you generate HDR screenshots, lightmaps, etc. from the engine, so they won’t appear extremely dark when viewed externally. And when the lighting artist specifies the overall scene EV for tonemapping, the shift will of course need to be accounted for there as well.
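Here is a rough Python sketch of where the shift shows up. The −10 EV value is the example from above; the helper names are hypothetical, and the tonemapping helper assumes one particular convention (bringing the scene EV to an internal value of 1.0) that your tonemapper may not share.

```python
import math

EV_SHIFT = -10.0              # example shift from the text
HALF_MAX = 65504.0
HALF_MIN_NORMAL = 2.0 ** -14


def ev_to_internal(ev: float) -> float:
    """Photographic EV -> internal linear value, shift applied."""
    return 2.0 ** (ev + EV_SHIFT)


def internal_to_ev(value: float) -> float:
    """Internal linear value -> photographic EV, shift undone for display."""
    return math.log2(value) - EV_SHIFT


def export_scale() -> float:
    """Multiplier that removes the shift for HDR screenshots, lightmaps, etc."""
    return 2.0 ** -EV_SHIFT


def scene_exposure_scale(scene_ev: float) -> float:
    """Assumed convention: scale that maps a pixel at scene_ev to 1.0 before tonemapping."""
    return 1.0 / ev_to_internal(scene_ev)


print(internal_to_ev(HALF_MIN_NORMAL), internal_to_ev(HALF_MAX))
```

The printed values are the effective range after the shift; the top end comes out a hair under +26 EV because the largest half-float is 65504 rather than 65536.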

Color Picking

Naturally, all color data will be stored internally in linear RGB space with the brightness factored in. However, when you interact with users in tools or in the engine, colors will need to be converted to/from the friendlier EV representation. Artists can set the brightness of lights, emissive textures, and so on using an EV slider that acts as a multiplier on the RGB values.

In this system, user-visible colors effectively have four components: RGB, and the EV multiplier. The RGB components are still limited to [0, 1]—attempting to set each component as an HDR value in EV individually would be far too confusing; it’s a better user experience to separate color from brightness, so artists can tweak one without accidentally altering the other.

It may make sense to keep the RGB part in gamma space; it’s more perceptually uniform, plus the color values of lights and so forth can be easily interchanged between the engine and external software such as Photoshop. The color components can also be set using HSV or another color space, instead of RGB.

This does mean that it’s possible to represent the same color in multiple ways—for instance, a gray of 50% (linear) can be represented by either an RGB of 73% (gamma) and EV of zero, or by an RGB of 100% and EV of −1.

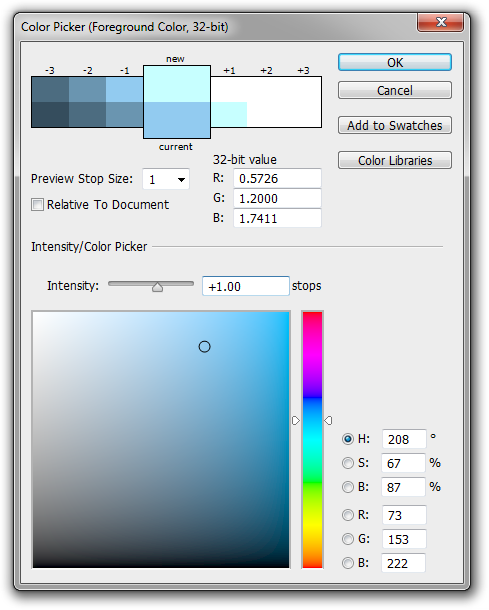

Photoshop uses this same scheme; in 32-bit mode, its color picker consists of the standard LDR RGB/HSV color picker, plus an “intensity” slider that’s measured in “stops”. Again, though it doesn’t explicitly say so, this slider is measured in EV and multiplies the LDR color.

To sum up, the conversion between the user-visible representation and the internal linear RGB space would look something like this:

float3 internalRGB = gammaToLinear(userRGB) * pow(2.0, userEV - 10.0);

The inverse conversion, with the EV part set by the greatest component of the color:

float multiplier = maxComponent(internalRGB);

float3 userRGB = linearToGamma(internalRGB / multiplier);

float userEV = log2(multiplier) + 10.0;
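For experimenting outside a shader, the same round trip can be sketched in Python. A few things here are my assumptions rather than part of the snippets above: the gamma curve is taken to be the standard sRGB transfer function, the function names are made up, and pure black is given an arbitrary EV of 0 since its brightness is undefined.

```python
import math

EV_SHIFT = -10.0  # the example shift used in the text


def gamma_to_linear(c: float) -> float:
    """sRGB decoding (assumed; the text does not name a specific curve)."""
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def linear_to_gamma(c: float) -> float:
    """sRGB encoding, inverse of gamma_to_linear."""
    return c * 12.92 if c <= 0.0031308 else 1.055 * c ** (1.0 / 2.4) - 0.055


def user_to_internal(user_rgb, user_ev):
    """Gamma-space RGB in [0, 1] plus an EV multiplier -> internal linear RGB."""
    scale = 2.0 ** (user_ev + EV_SHIFT)
    return tuple(gamma_to_linear(c) * scale for c in user_rgb)


def internal_to_user(internal_rgb):
    """Internal linear RGB -> (gamma RGB, EV), normalized so the largest component is 1."""
    multiplier = max(internal_rgb)
    if multiplier <= 0.0:
        return (0.0, 0.0, 0.0), 0.0  # black: EV is arbitrary, so pick 0
    user_rgb = tuple(linear_to_gamma(c / multiplier) for c in internal_rgb)
    user_ev = math.log2(multiplier) - EV_SHIFT
    return user_rgb, user_ev


# The 50% gray example: two user-visible encodings, nearly the same internal value.
print(user_to_internal((0.735, 0.735, 0.735), 0.0))
print(user_to_internal((1.0, 1.0, 1.0), -1.0))
```

Because the inverse always normalizes by the greatest component, a color entered as 73% gray at 0 EV will come back as 100% at about −1 EV. If that surprises artists, one option is to store the user-visible pair alongside the internal value instead of reconstructing it.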

HDR Light Values

In a physically-based renderer, it seems to make sense to specify the brightness of light sources in physical units of power, i.e. watts. This has the benefit of being intuitive, since many people have a general idea of how bright a 100-watt light bulb is in reality, for instance.

The trouble with using watts is that different kinds of light sources—such as incandescent, fluorescent, and LED lights—have very different efficiency levels, so there is no consistent relationship between power and brightness. A 100-watt incandescent bulb puts out about the same amount of visible light as a 20-watt CFL or LED bulb.

We can use lumens instead; this is a unit like watts, but which counts only visible light, weighted by the human luminous efficiency function. Lumens are less familiar to most people (at least in the USA), but with a bit of practice one can develop intuition for this unit.

However, there are still a few issues here:

- While watts or lumens are sensible units for a finite, bounded light source, they don’t work for directional lights or the sky, which are at infinity and would thus emit infinitely many lumens. You’ll have to use a different unit for these, breaking consistency.

- You still have the problem of a wide range of brightnesses—from candles or flashlights with ~10 lumens up to stadium floodlights with ~100,000 lumens. Granted, it’s not as bad as a dynamic range of a billion, but it’s still an annoyingly large range.

- It’s hard to measure the total luminous flux output by a real-world light source, so it’s hard to use real-world sources as reference for calibrating in-game values.

For all these reasons, I think it’s generally better to specify light source values using EV. For area lights, including the sky, you can specify the emissive EV of the surface itself. If the total luminous flux is needed, it can be calculated as π × luminance × area (where luminance is 0.125 cd/m² × 2^EV). For punctual lights, including directional lights, you can specify the EV reflected by a diffuse white surface facing the source at a standardized distance. The source’s native brightness can then be calculated by solving its attenuation equation.
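To make those two calculations concrete, here is a small Python sketch. It rests on assumptions that go beyond the text: the area light is treated as a one-sided Lambertian emitter, the white card is a perfect diffuse reflector (albedo 1), and the punctual light uses plain inverse-square attenuation. If your engine uses a different falloff, you would solve that equation instead. All function names are hypothetical.

```python
import math

EV0_LUMINANCE = 0.125  # cd/m^2 at 0 EV


def ev_to_luminance(ev: float) -> float:
    return EV0_LUMINANCE * 2.0 ** ev


def area_light_flux(emissive_ev: float, area_m2: float) -> float:
    """Lumens emitted by a one-sided Lambertian surface: pi * luminance * area."""
    return math.pi * ev_to_luminance(emissive_ev) * area_m2


def card_illuminance(card_ev: float) -> float:
    """Lux arriving at a perfect white diffuse card that reads card_ev (L = E / pi)."""
    return math.pi * ev_to_luminance(card_ev)


def punctual_intensity(card_ev: float, distance_m: float) -> float:
    """Candela for an inverse-square point light producing card_ev at distance_m."""
    return card_illuminance(card_ev) * distance_m ** 2


print(area_light_flux(3.0, 1.0))     # 1 m^2 panel at 1 cd/m^2
print(punctual_intensity(3.0, 2.0))  # card reads +3 EV at 2 m
```

For a directional light there is no distance to solve for, so `card_illuminance` alone gives the value to feed into the light.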

Of course, nothing stops you from providing both options in your engine and tools, and converting back and forth between lumens and EV-at-standard-distance on the fly.
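A sketch of that back-and-forth conversion might look like the following. It assumes an isotropic point light (flux = 4π × intensity), inverse-square falloff, a perfect white diffuse card, and a standard distance of 1 meter; that last value is purely a placeholder of mine, so pick whatever distance suits your tools.

```python
import math

EV0_LUMINANCE = 0.125       # cd/m^2 at 0 EV
STANDARD_DISTANCE_M = 1.0   # hypothetical standard distance


def lumens_to_card_ev(lumens: float, distance_m: float = STANDARD_DISTANCE_M) -> float:
    """EV read off a white card facing an isotropic point light of the given flux."""
    intensity = lumens / (4.0 * math.pi)       # candela
    illuminance = intensity / distance_m ** 2  # lux at the card
    luminance = illuminance / math.pi          # cd/m^2 reflected by the card
    return math.log2(luminance / EV0_LUMINANCE)


def card_ev_to_lumens(card_ev: float, distance_m: float = STANDARD_DISTANCE_M) -> float:
    """Inverse of lumens_to_card_ev under the same assumptions."""
    luminance = EV0_LUMINANCE * 2.0 ** card_ev
    return 4.0 * math.pi * math.pi * luminance * distance_m ** 2


ev = lumens_to_card_ev(1600.0)  # roughly a 100-watt incandescent bulb
print(ev, card_ev_to_lumens(ev))
```

Spot lights and other non-isotropic sources would need the 4π replaced by the solid angle they actually emit into.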

HDR Textures

Surprisingly, there aren’t that many cases I’ve seen where HDR textures are needed for an HDR game. One might be worried that HDR lighting environments would exacerbate the precision problems of standard BC1 texture compression, producing crazy banding under bright light. In my experience, this isn’t much of an issue for the usual diffuse, specular, etc. textures.

A case where HDR textures can come in handy is for high-contrast emissive textures, such as neon signs, TV screens, and particles/FX. One can attempt these using ordinary BC1-compressed textures as masks, scaled in the pixel shader by an overall color and brightness (set using an HDR color picker as discussed above), but this really does show off the poor precision of BC1; when the texture’s luminance is raised to a reasonably high level—say, for a bright neon sign at night—the edges of the emissive area can develop a ton of noise and look awful. Bloom will help soften the edges of textures like these, but compression artifacts may still be visible.

From top to bottom: original texture; brightened by +8 EV; and BC1-compressed +8 EV. No bloom. (Font: Neon Lights)
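To get a feel for why the brightness multiplier hurts, here is a deliberately simplified, single-channel model in Python. It is not a real BC1 codec: it only mimics the fact that each 4×4 block picks from four values (two 5-bit endpoints plus two interpolated entries), and it uses a naive min/max endpoint choice.

```python
def quantize_5bit(x: float) -> float:
    """Snap a [0, 1] value to the nearest 5-bit level, as for a BC1 red/blue endpoint."""
    return round(x * 31) / 31


def encode_block(texels):
    """Approximate a 16-texel block with a 4-entry palette between its min and max."""
    lo = quantize_5bit(min(texels))
    hi = quantize_5bit(max(texels))
    palette = [lo, (2 * lo + hi) / 3, (lo + 2 * hi) / 3, hi]
    return [min(palette, key=lambda p: abs(p - t)) for t in texels]


# A soft edge ramping from black to full mask value across one block.
block = [i / 15 for i in range(16)]
decoded = encode_block(block)

max_error = max(abs(d - t) for d, t in zip(decoded, block))
gain = 2.0 ** 8  # +8 EV brightness multiplier applied in the shader

print(f"max error in mask: {max_error:.3f}")
print(f"max error after +8 EV: {max_error * gain:.1f}")
```

The relative error is the same before and after, of course. The point is that an absolute error that was a modest shade difference in the mask ends up many times larger than display white once the multiplier is applied.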

For single-color neon or stencil-like textures (like the one above), distance fields can be very useful. But more generally, BC6 compression is a great choice for emissive textures.

Even with 8-bit source data, BC6 makes a big difference versus BC1. Having 16-bit source data is even better. Note that in Photoshop, 16-bit images are still fixed-point, not float; values are interpreted as lying in [0, 1], and the workflow is just the same as for 8-bit images, except that more bits of precision are kept—even though you can’t see them on your 8-bit display! Therefore, artists don’t have to worry too much about the HDR-ness of these textures; they can just set it to 16-bit at the beginning and work as usual.

Another issue to consider with these high-contrast emissive textures is which downsampling filter to use for creating mipmaps. I think many developers use Lanczos or Kaiser filters to help maintain sharpness in mipmaps; however, when applied to high-contrast emissive textures, the ringing in these filters can become a problem. A Gaussian downsampling filter gives blurrier results, but ones free of ringing.
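The ringing is easy to see in one dimension. The sketch below halves a hard emissive edge (0 on one side, 256 on the other, i.e. a mask brightened by +8 EV) with a Lanczos-3 kernel and with a Gaussian. The Gaussian’s sigma of half an output texel and the clamped edge handling are arbitrary choices of mine for illustration.

```python
import math


def lanczos3(x: float) -> float:
    """Lanczos kernel with a = 3; it has negative lobes, which cause ringing."""
    a = 3.0
    if x == 0.0:
        return 1.0
    if abs(x) >= a:
        return 0.0
    px = math.pi * x
    return a * math.sin(px) * math.sin(px / a) / (px * px)


def gaussian(x: float) -> float:
    """Gaussian kernel, sigma = 0.5 output texels (assumed); never negative."""
    return math.exp(-0.5 * (x / 0.5) ** 2)


def downsample_2x(signal, kernel, radius=3):
    """Halve a 1D signal; radius is the kernel support in output texels."""
    n = len(signal)
    half_width = 2 * radius  # taps on each side, in input samples
    out = []
    for j in range(n // 2):
        center = 2 * j + 1   # boundary between the two input samples being merged
        total = 0.0
        weight_sum = 0.0
        for k in range(-half_width, half_width):
            i = min(max(center + k, 0), n - 1)  # clamp at the borders
            w = kernel((k + 0.5) / 2.0)         # offset in output-texel units
            total += w * signal[i]
            weight_sum += w
        out.append(total / weight_sum)
    return out


edge = [0.0] * 32 + [256.0] * 32
sharp = downsample_2x(edge, lanczos3)
soft = downsample_2x(edge, gaussian)

print("Lanczos  min/max:", min(sharp), max(sharp))
print("Gaussian min/max:", min(soft), max(soft))
```

The Lanczos result dips below zero on the dark side of the edge and overshoots on the bright side, while the Gaussian result stays within the original range. Negative emissive values are especially unwelcome in an HDR pipeline, so if you keep a sharpening filter you will probably want to clamp at least the undershoot.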

Authoring textures in full floating-point is probably rarely necessary for an HDR game. One place where they could be potentially useful is with skies, which can contain a large range of brightnesses—especially sunrises/sunsets, and skies with dramatic weather. However, it’s probably possible to get by with 16-bit textures and an HDR multiplier for skies as well. If you do want to try full floating-point textures, fortunately Photoshop today has decent support for working with them in its 32-bit mode. These textures can be compressed with BC6 as well.

Measuring Luminance IRL

Now that we’ve got all this machinery for working with HDR values in terms of EV, all that’s left is to decide what exposure values to actually use. If you’re building a light source or adding an emissive material to your game, how do you know what EV to set it to? Of course, to some extent this is an artistic decision—you make the light as bright as you need it to be for whatever effect you’re creating. However, when creating a realistic game world it’s wise to use real-world luminance values as a reference.

These can be measured using a photographer’s spot meter, such as the Minolta Spotmeter F that I use, shown above. In case you’re not familiar with this device, it’s basically a camera with one big pixel, typically 1° wide, in the center of the FOV. You point it at something and push the button, and it reads out the brightness of the target in EV.

You can find one of the many models of spot meters online for usually $100–200 USD. IMO, every graphics programmer and every lighting artist should have one—they’re incredibly handy! More expensive models also exist that have smaller FOVs (for pinpoint accuracy), and better low-light sensitivity—my Minolta bottoms out at about +1 or +2 EV, which limits its usefulness at night.

When used on an emissive surface (a light bulb, a neon sign, the sky) you can put the measured EV directly into the game engine. In combination with the white card in a Macbeth color checker, or just a piece of matte white paper, you can also measure ambient or directional light: just put the white card in the shadow or in sunlight, and point the spot meter at it. If you add a debug command to spawn a virtual white card in your game engine and look at its pixel value in EV, you can then directly compare these measurements with the values generated by lights in the game. For example, if a given light source produces a reading of +8 EV on a white card 3 meters away, you can reproduce this situation in the game engine and thereby infer the appropriate brightness for the light.
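The virtual white card readout could be sketched roughly as follows. The luminance weights are the Rec. 709 ones, which assumes your internal RGB uses sRGB/Rec. 709 primaries, and the −10 EV shift is the example value from earlier; both function names are hypothetical.

```python
import math

EV_SHIFT = -10.0  # example internal shift from earlier in the article


def pixel_to_ev(linear_rgb) -> float:
    """EV of an internal linear RGB pixel, e.g. one sampled from the virtual white card."""
    r, g, b = linear_rgb
    luminance = 0.2126 * r + 0.7152 * g + 0.0722 * b  # Rec. 709 weights (assumed primaries)
    if luminance <= 0.0:
        return -math.inf
    return math.log2(luminance) - EV_SHIFT


def brightness_correction(measured_ev: float, rendered_ev: float) -> float:
    """Factor to scale a light by so the rendered card matches the spot meter reading."""
    return 2.0 ** (measured_ev - rendered_ev)


rendered = pixel_to_ev((0.125, 0.125, 0.125))  # card pixel read back from the engine
print(rendered, brightness_correction(8.0, rendered))
```

The correction factor relies on lighting being linear, so it only holds when the card is lit by the one light you are calibrating; with several lights contributing, you would need to isolate each in turn.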

A test scene with lighting calibrated to match real-world reference like this can then be used as a testbed for other things. Texture artists can use it to help find the right diffuse level for materials (especially important for global illumination, as diffuse color affects bounce light). Post-processing passes like tonemapping, bloom and bokeh DOF can be tuned in the testbed as well.

Conclusion

Artists and coders with a photography background may already have some intuition for EVs—but even if you don’t, taking a few trips around town with a spot meter and a white card will help you get a feel for how bright things really are. Those data will also provide useful reference points for setting up in-game lighting environments.

Making your engine and tools understand EV is the icing on the cake: measured values can be used directly in the game, and EV provides a convenient perceptually-uniform scale for lighting artists to set the brightness of lights and emissive materials. They can also set the overall scene exposure for tonemapping in a manner akin to setting up a shot on a real-world camera.

Photographers have used EV for decades to help tame the vast range of luminances that the human eye can perceive, and game developers can take advantage of this invention for our virtual worlds as well.